"Which is better, InBody or AI composition analysis?" is a common question, but it is often framed incorrectly.

These tools are not best used as direct competitors. They are better used as complementary systems with different roles.

Why numbers can differ without either tool being "wrong"

InBody and AI approaches differ in methodology and capture context, so their readings will rarely line up exactly. Exact value matching is therefore not the right success criterion.

The more useful criterion is trend usability:

- can you measure consistently?

- can you interpret direction clearly?

- can you make weekly adjustments from the data?
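One way to make "direction" concrete is to fit a straight line through recent readings and look at its slope. The sketch below is only an illustration under stated assumptions: the function name `weekly_trend`, the day offsets, and the body-fat values are all hypothetical, and nothing here reflects how InBody or Kodebody compute trends internally.

```python
def weekly_trend(days, values):
    """Least-squares slope of the readings, expressed in units per week.

    `days` are day offsets from the first reading; `values` are readings
    from ONE tool (do not mix tools in the same series).
    """
    n = len(days)
    if n < 2 or n != len(values):
        raise ValueError("need at least two readings, one value per day offset")
    mean_x = sum(days) / n
    mean_y = sum(values) / n
    sxx = sum((x - mean_x) ** 2 for x in days)
    if sxx == 0:
        raise ValueError("readings must be taken on different days")
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(days, values))
    return 7 * sxy / sxx  # slope per day scaled to slope per week


# Hypothetical body-fat % readings, roughly 3 per week, from a single tool.
days = [0, 2, 4, 7, 9, 11, 14]
body_fat = [22.4, 22.6, 22.3, 22.1, 22.2, 21.9, 21.8]

trend = weekly_trend(days, body_fat)
direction = "down" if trend < 0 else "up" if trend > 0 else "flat"
print(f"{trend:+.2f} points/week ({direction})")
```

The absolute numbers matter less than whether the slope stays readable from week to week when the measurement conditions are kept the same.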

Role-based comparison

| Category | InBody | AI composition workflow |
|---|---|---|
| Core strength | benchmark precision | frequent execution tracking |
| Typical cadence | monthly | 2-3 times/week |
| Best use case | baseline resets | weekly decision loops |
| Main risk | long gaps between checks | over-focusing on exact values |

Comparing the tools by role makes implementation clearer than debating which one is more accurate.

Recommended operating model

- use InBody as monthly anchor

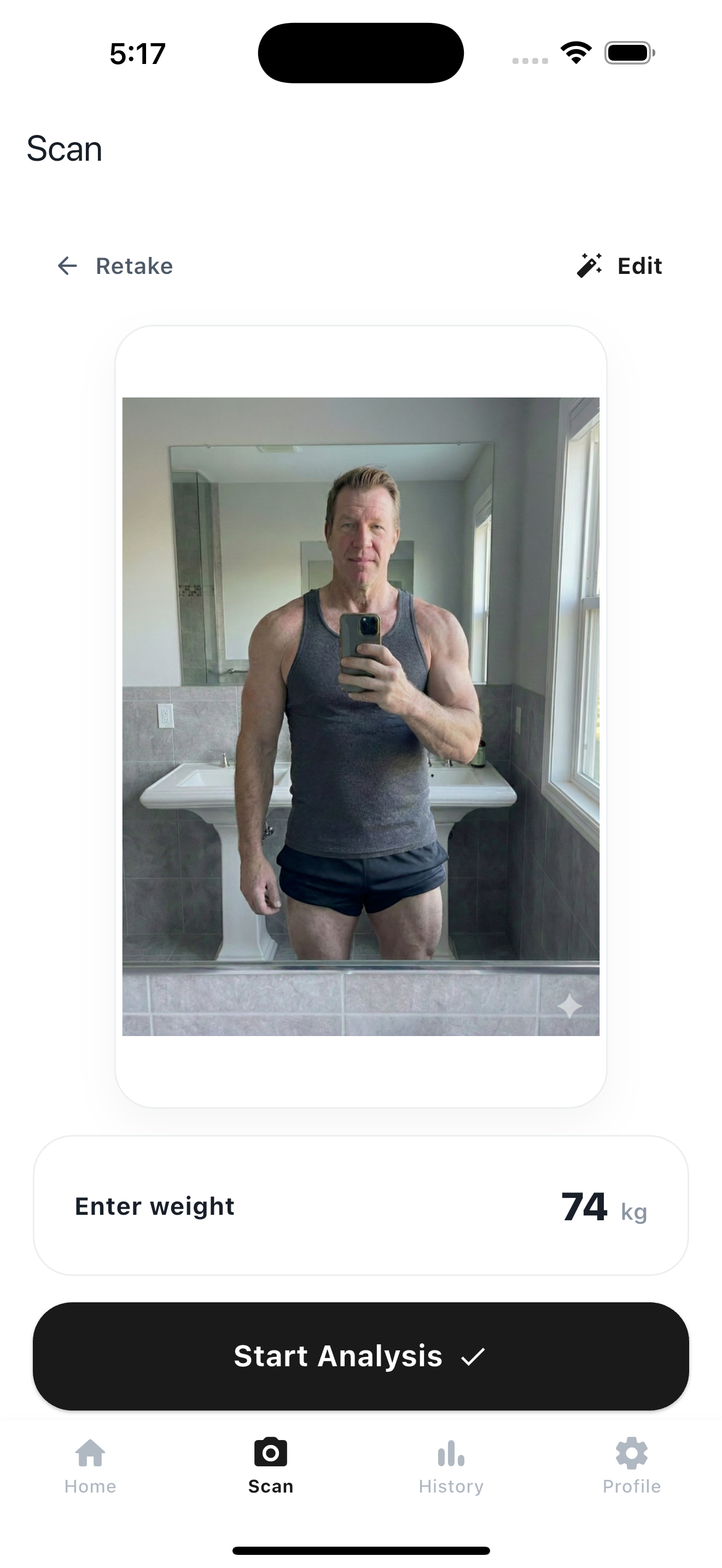

- use Kodebody as weekly trend and execution system

This gives both benchmark confidence and operational continuity.

The winning setup is benchmark precision + execution continuity.


Common misuses

- expecting exact cross-tool value matching

- using benchmark checks without weekly operational tracking

- switching tools too often and breaking comparability

- changing strategy based on one outlier point
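The last misuse, reacting to a single outlier, can be guarded against with a simple check before changing anything. The following is a minimal sketch, not a feature of either tool: `flag_outliers`, the 5-reading window, and the 1.0-point threshold are assumptions chosen for illustration and should be tuned to your own data.

```python
from statistics import median


def flag_outliers(values, window=5, threshold=1.0):
    """Flag readings that sit far from the median of the preceding readings.

    A reading is flagged when it differs from the median of up to `window`
    earlier readings by more than `threshold` (same unit as the readings).
    The first three readings are never flagged: too little history.
    """
    flags = []
    for i, value in enumerate(values):
        recent = values[max(0, i - window):i]
        if len(recent) < 3:
            flags.append(False)
            continue
        flags.append(abs(value - median(recent)) > threshold)
    return flags


# Hypothetical body-fat % readings; the fifth one is a suspicious jump.
readings = [22.4, 22.3, 22.5, 22.2, 24.1, 22.1]
flags = flag_outliers(readings)
for value, is_outlier in zip(readings, flags):
    note = "re-measure before acting" if is_outlier else "ok"
    print(f"{value:.1f}  {note}")
```

A flagged point is a prompt to re-measure under the usual conditions, not a reason to change the plan.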

Bottom line

InBody and AI tools solve different layers of the same problem.

Use InBody for checkpoint precision and AI tracking for repeatable weekly decisions. Most people get better results from this role split than from trying to pick one universal winner.

- Product page: Kodebody

- Related read: How to Read an InBody Report

- Related read: How to Read Kodebody History Graphs

- Related read: How to Track Body Composition Without InBody